Abstract

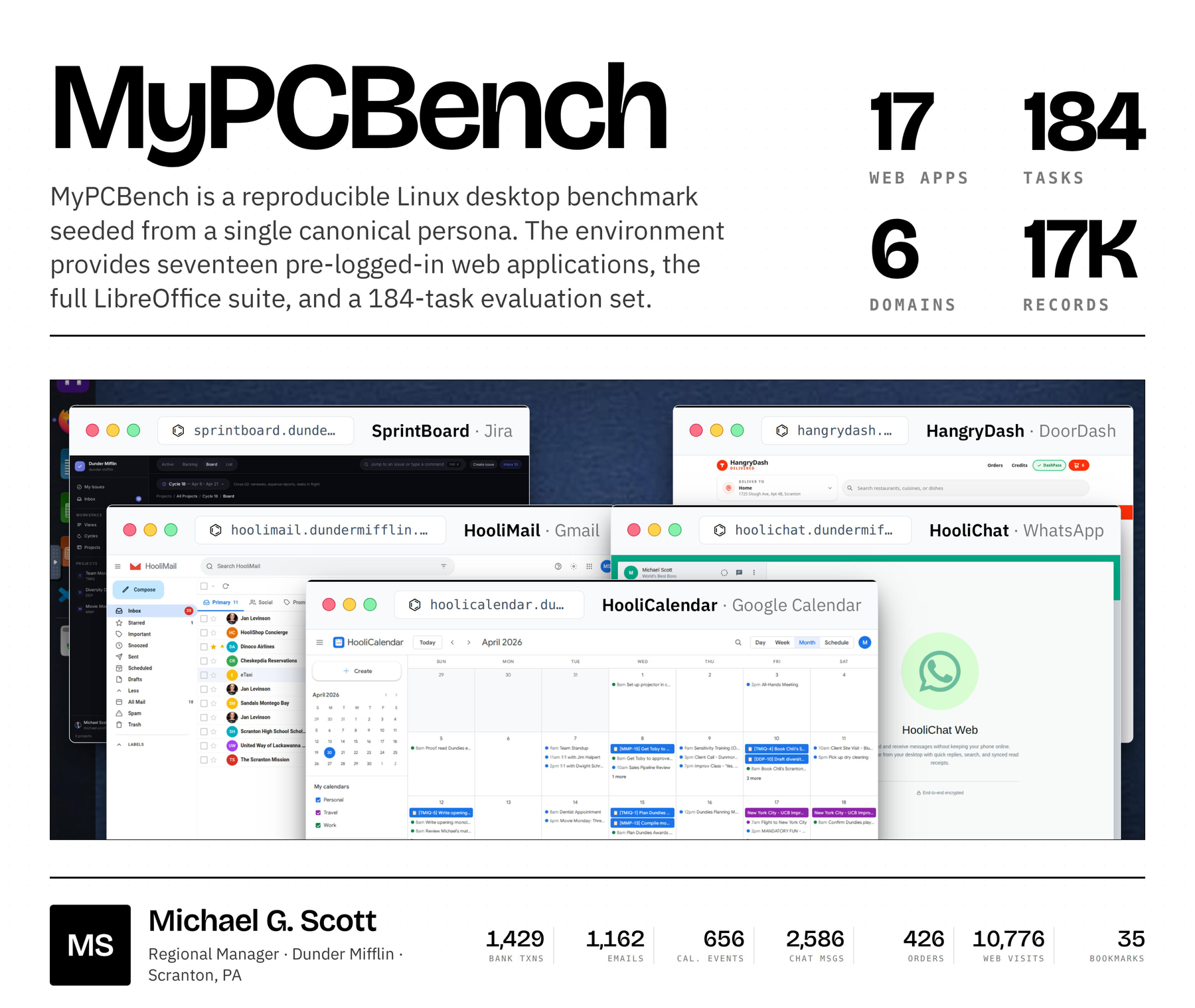

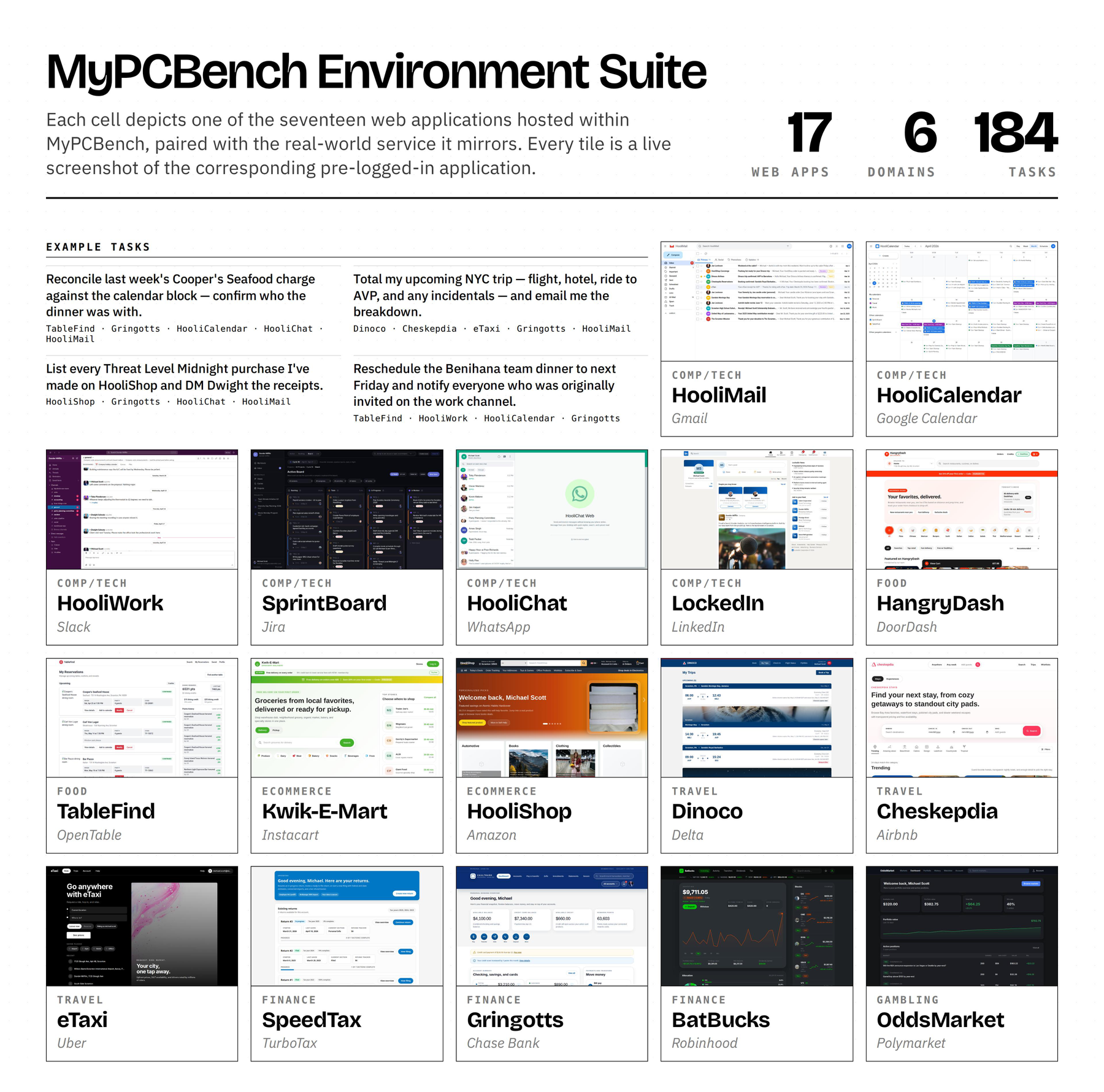

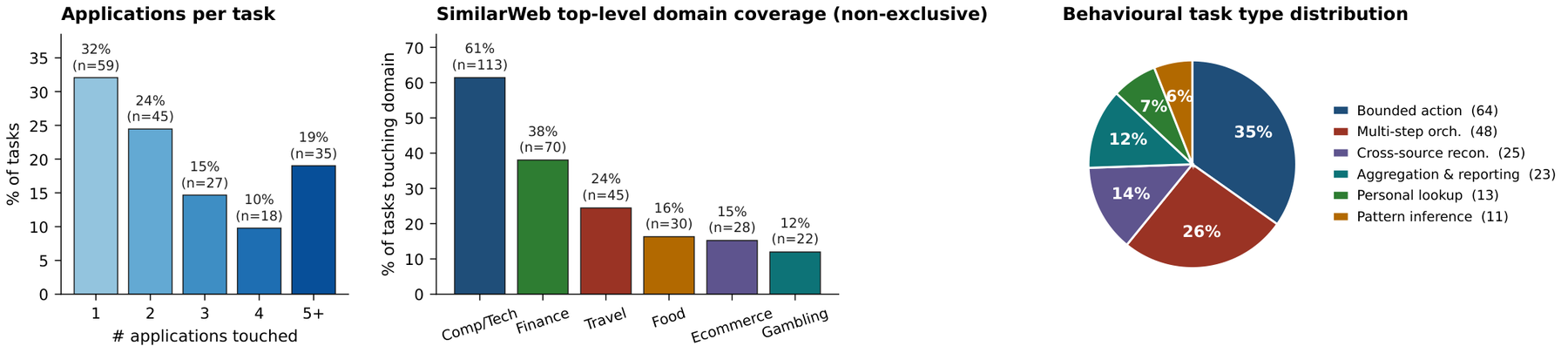

Current benchmarks for computer-use agents evaluate models in impersonal environments. This leaves a gap between evaluation and deployment, because personal assistants are expected to work across a user's whole digital life. MyPCBench is a reproducible Linux-desktop benchmark seeded end-to-end with one user's identity, history, and logged-in accounts, so the same agent loops that OSWorld-style benchmarks already use can be pointed at tasks that require knowing who the user is.

Motivation

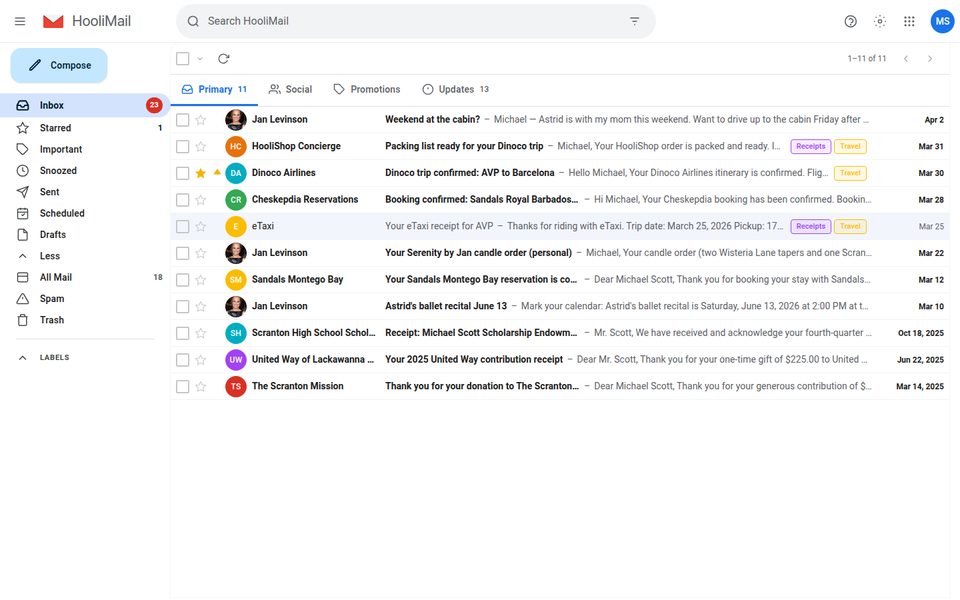

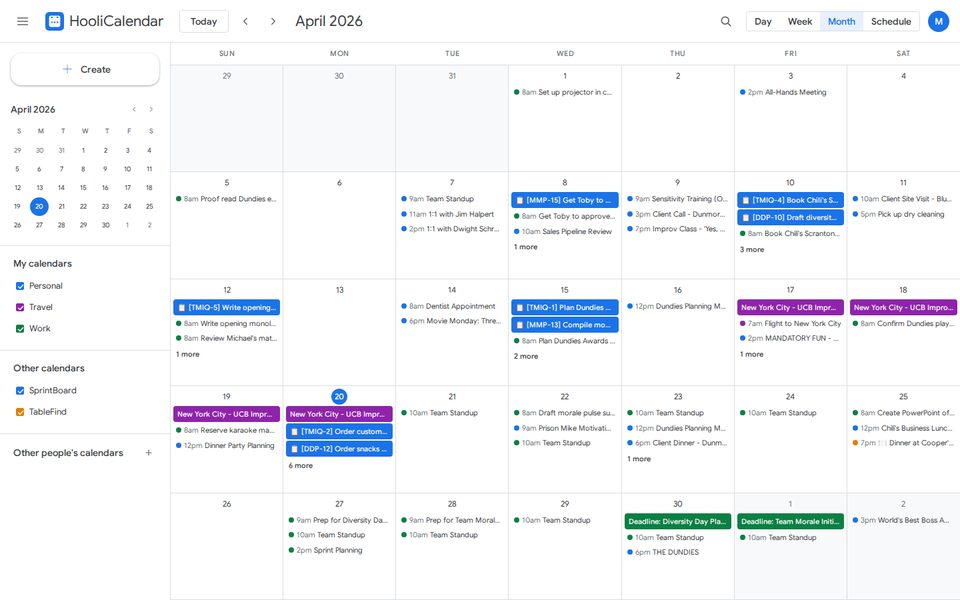

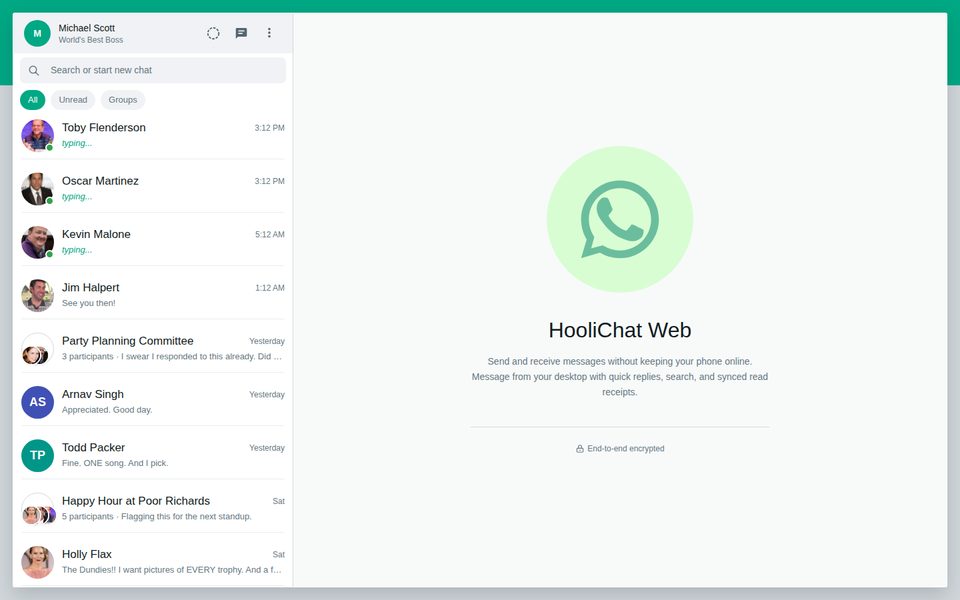

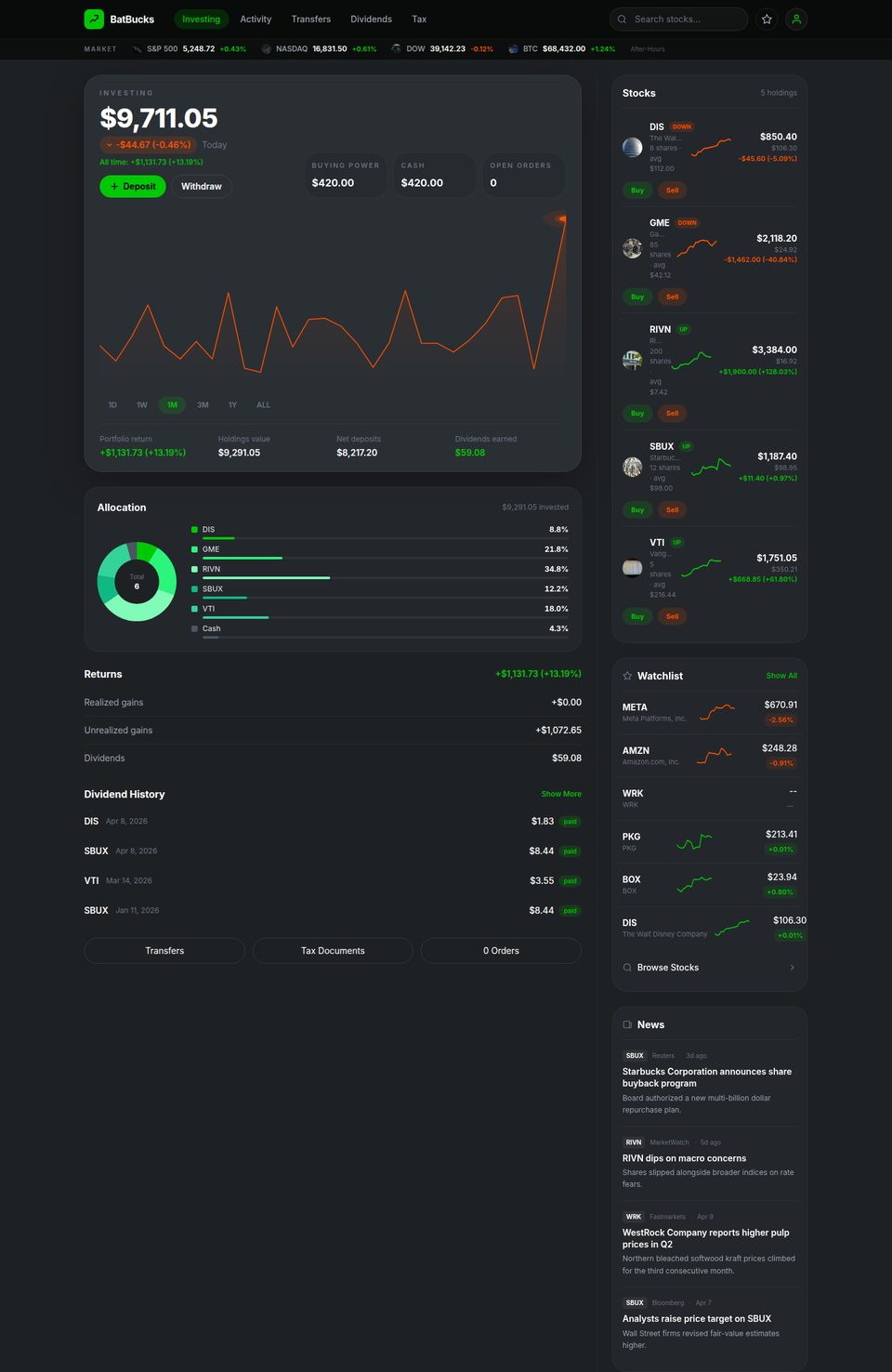

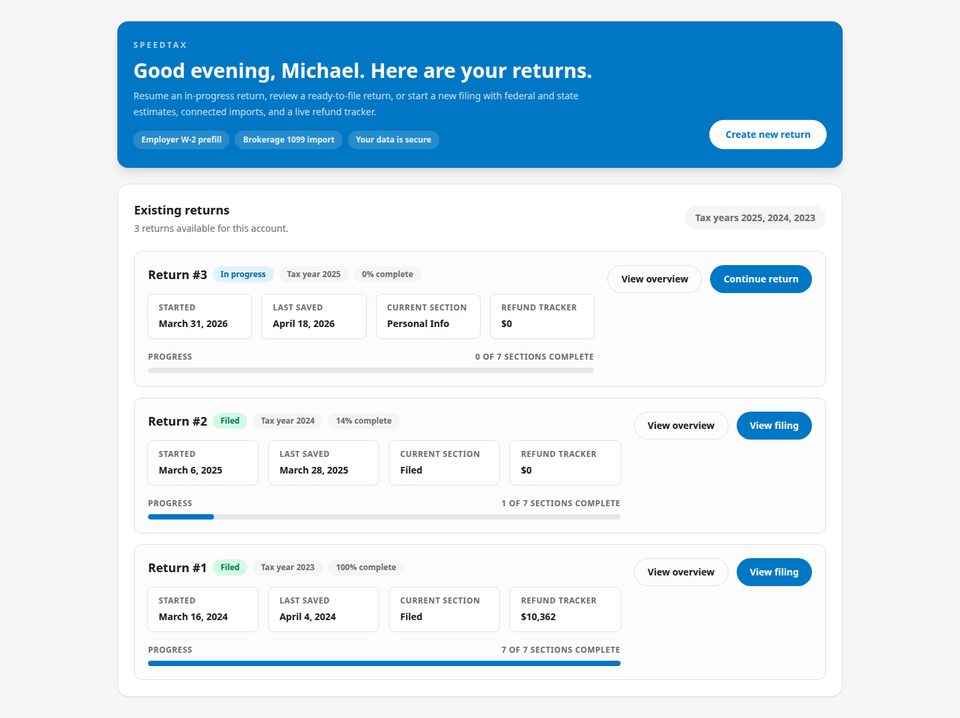

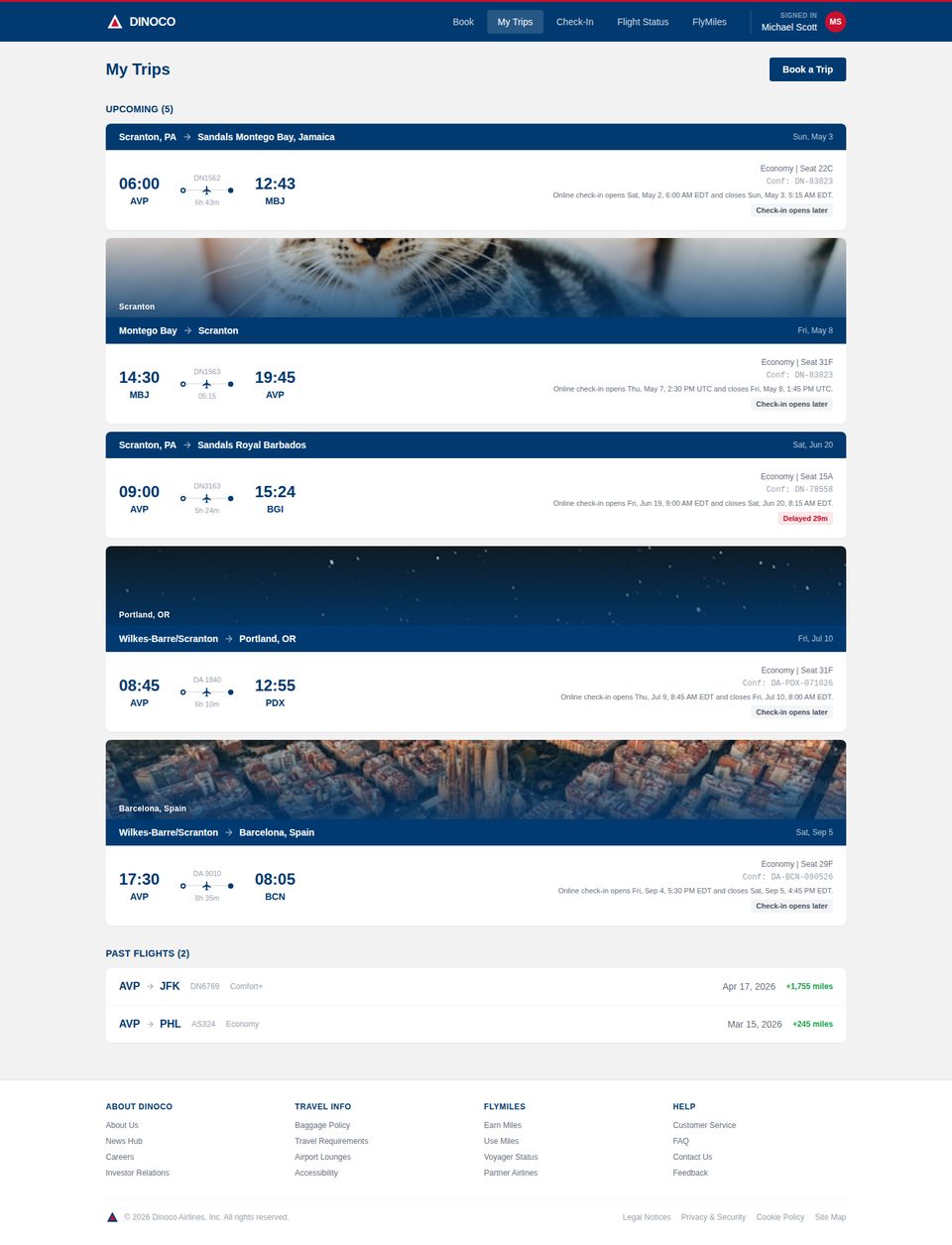

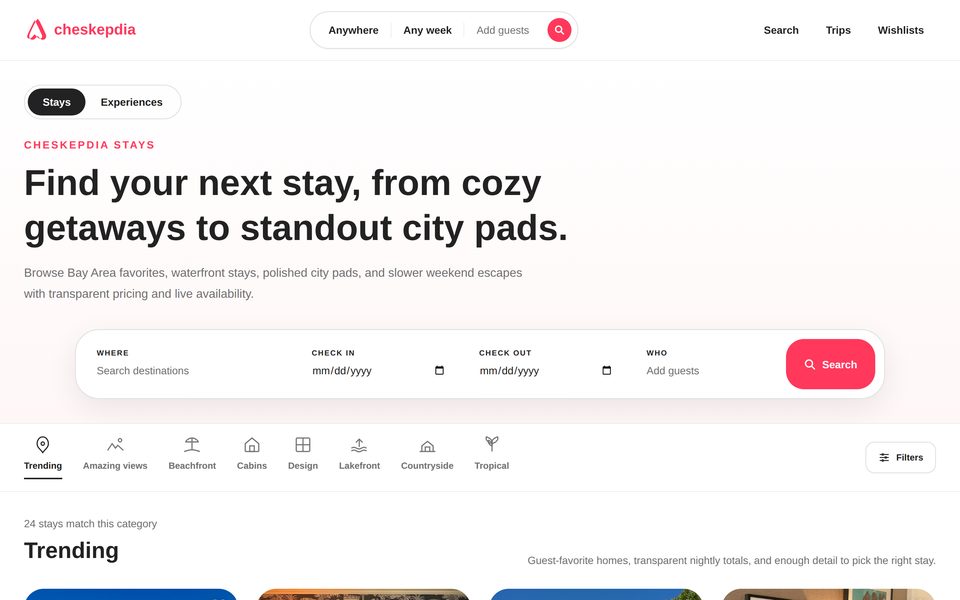

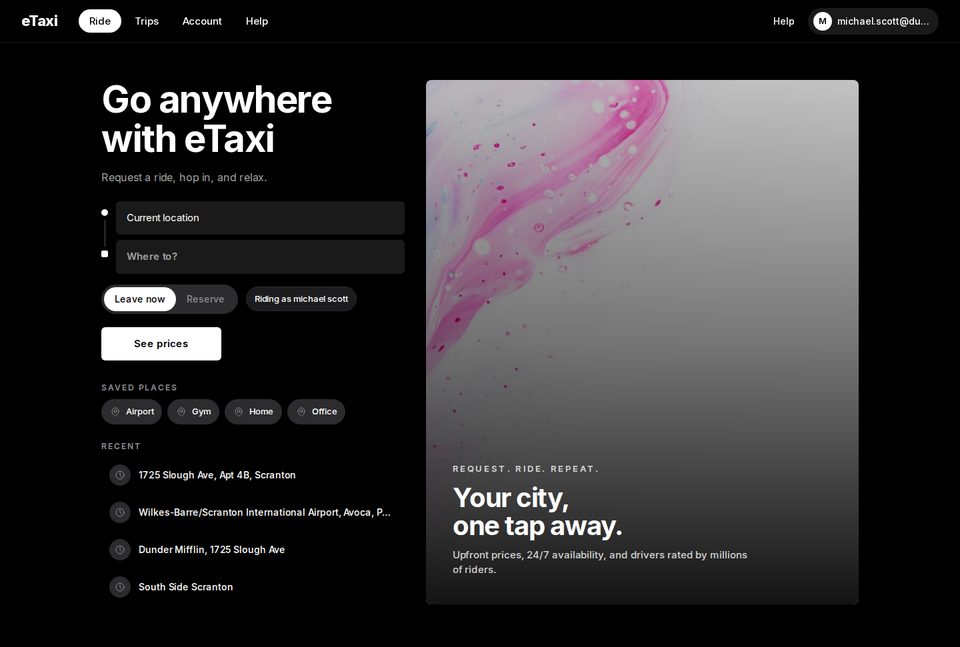

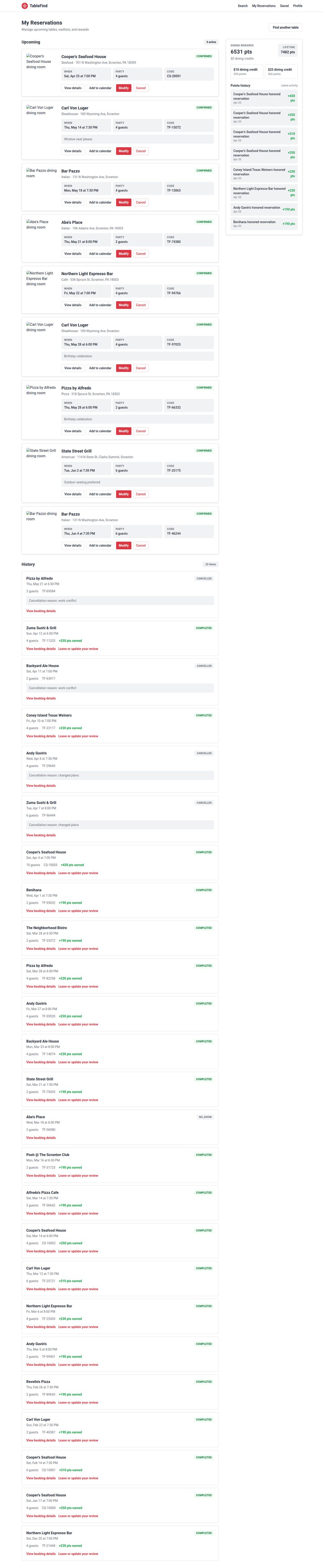

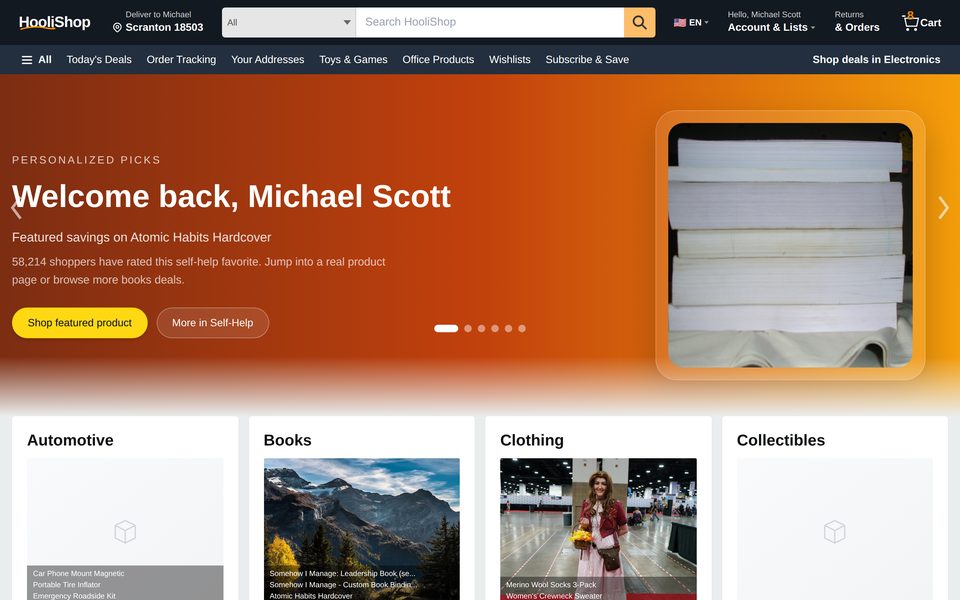

Web benchmarks exclude any site that requires logging in or depends on variable personal information, which rules out a large fraction of what real users ask their assistants to do. Desktop benchmarks seed only what the task literally needs. MyPCBench pins a single user identity, Michael Scott, and spans the consumer-application surface that personal computers actually run: banking, travel, food delivery, calendar, messaging.

Task source

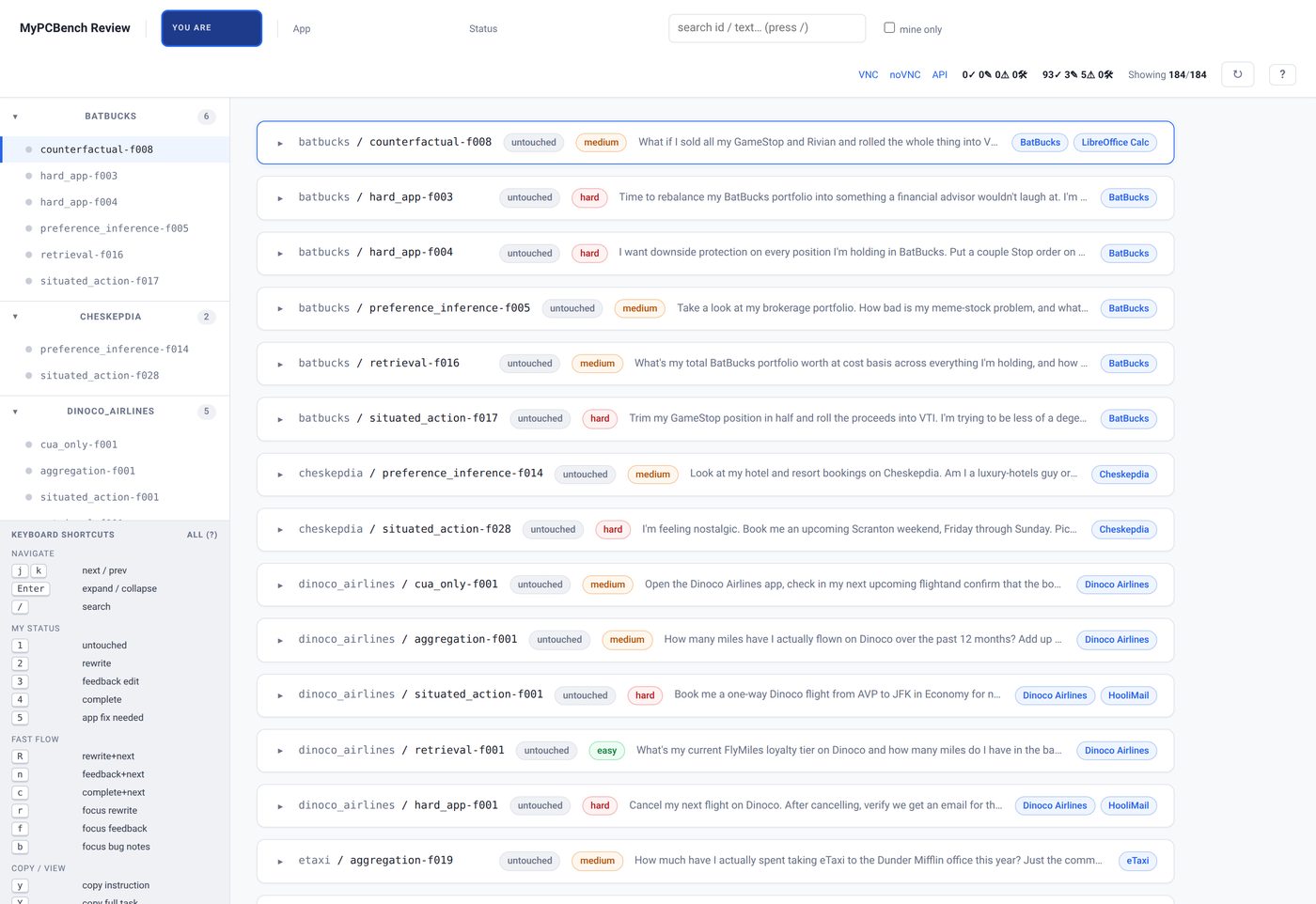

The 184 tasks were rewritten from 2,749 anonymized requests collected from the OpenClaw Discord, with named entities re-aligned to the persona's seeded data.

Main result

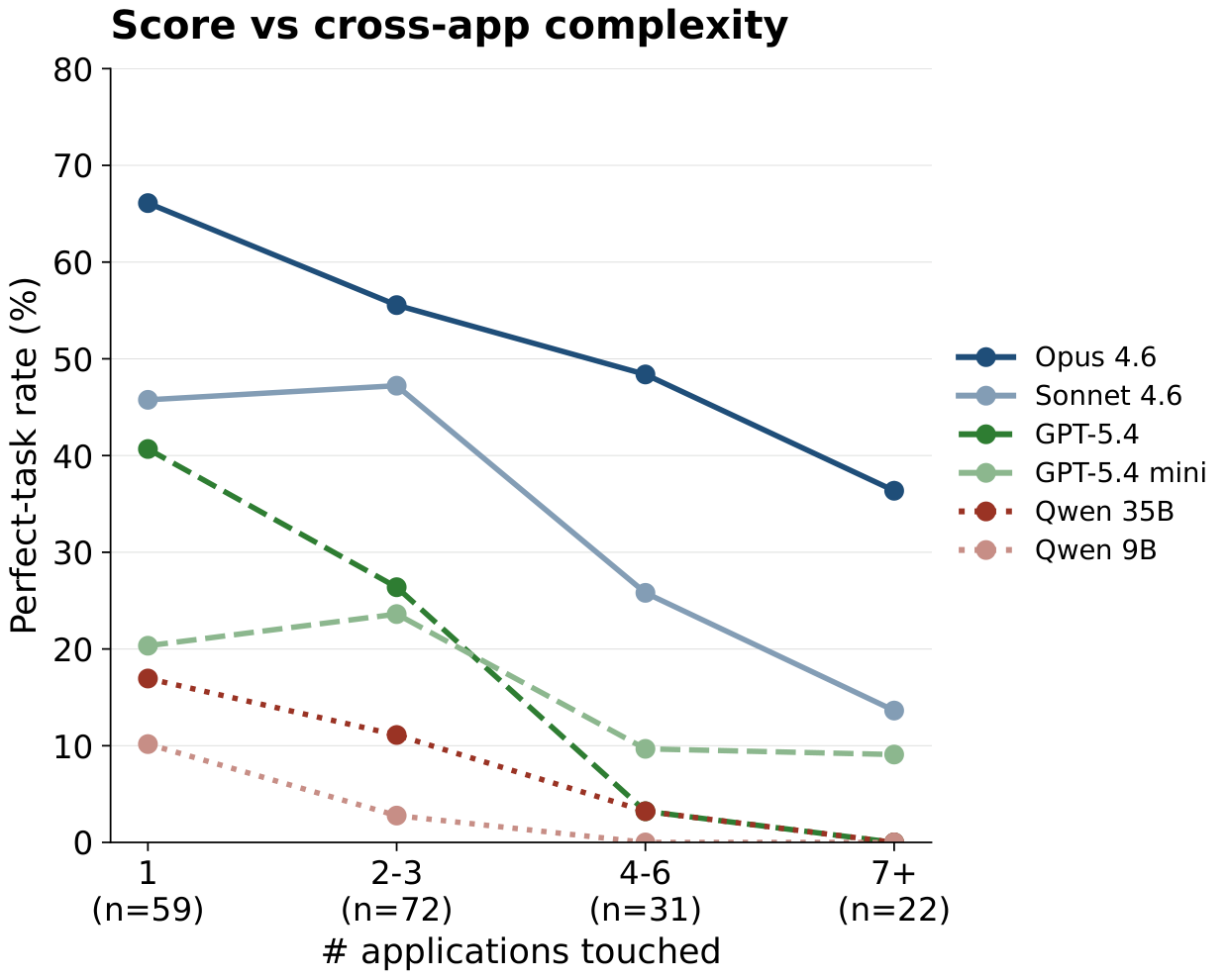

The strongest evaluated agent, Claude Opus 4.6, achieves a perfect score on 55.4% of MyPCBench tasks and on 36% of the slice of tasks involving seven or more applications. GPT-5.4, Qwen 3.5 35B-A3B, and Qwen 3.5 9B reach 0% perfect on the same slice.
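One plausible reading of "perfect" is that a task counts only when every rubric in its grading specification passes. The sketch below computes such a rate under that assumption. The record layout and the field names `num_apps` and `rubrics` are hypothetical and are not taken from the released artifacts.

```python
from typing import Dict, List


def perfect_rate(tasks: List[Dict], min_apps: int = 0) -> float:
    """Fraction of tasks using at least `min_apps` applications whose rubrics all pass."""
    selected = [t for t in tasks if t["num_apps"] >= min_apps]
    if not selected:
        return 0.0  # avoid dividing by zero on an empty slice
    perfect = sum(1 for t in selected if all(t["rubrics"]))
    return perfect / len(selected)


# Hypothetical records: each task carries per-rubric pass/fail verdicts.
example_tasks = [
    {"num_apps": 2, "rubrics": [True, True]},
    {"num_apps": 7, "rubrics": [True, False, True]},
    {"num_apps": 8, "rubrics": [True, True, True]},
    {"num_apps": 3, "rubrics": [False]},
]

overall = perfect_rate(example_tasks)                 # all tasks
seven_plus = perfect_rate(example_tasks, min_apps=7)  # 7+-application slice
```

Under this all-or-nothing definition, a single failed rubric removes a task from the perfect count, so scores on long multi-application tasks can drop sharply even when most rubrics pass.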

Released artifacts

- Docker environment image with the persona seed.

- 184 tasks with per-rubric grading specifications.

- Agent harness over the OSWorld VNC bridge.

- Gemini-flash-lite-based rubric judge.